Are Large Language Models the key to helping each of us discover our own personal Move 37?

Intelligence is a powerful word, but it has many definitions, and that multiplicity complicates the already difficult question of what intelligence really is.

Humans are generally regarded as the most intelligent species on Earth. To understand this intelligence, biology, neuroscience, psychology, and many other fields have developed different theories about how our minds work.

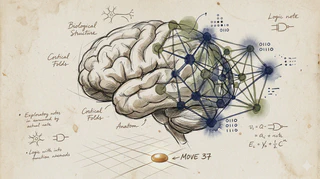

Unexpectedly, however, Large Language Models (LLMs) may give us our best hint yet about how human brains work. Suppose we treat an LLM as a rough approximation of the brain, a kind of “digital twin” of how language functions within us. By inspecting its structure, weights, activations, and accuracy, we can formulate new hypotheses and use them to build a more precise neurological model.

As reinforcement learning is integrated into LLMs, we may also learn more about the control aspects of human behavior. Why do we act the way we do? How do we act optimally when solving complex problems? The same applies to large vision models, which could help us understand how we process sensory information.

Even though we have studied the brain for many years, much remains unknown. I think these large models may give us significant clues about how human intelligence works.

If we discover a better model of our cognition, we may unlock hidden potential in the human mind. That could be one of the most impactful discoveries of the 21st century, leading to cures for diseases, improved learning efficiency, and better decision-making. Such a breakthrough could profoundly benefit a worldwide population of more than 8 billion people.

Just as AlphaGo generated Move 37, an unexpectedly brilliant play in its 2016 match against Lee Sedol, these models may enable every human to generate their own Move 37, introducing an exciting new era of breakthroughs.

This text is based on the great paper by Terrence Sejnowski, “Large Language Models and the Reverse Turing Test” (2023).